Google’s AI agent, “Big Sleep,” identified a critical memory corruption vulnerability (CVE-2025-6965) in the SQLite open-source database engine and helped prevent its exploitation. Google describes this as a significant milestone: it is believed to be the first time an AI agent has been used to directly foil an in-the-wild effort to exploit a vulnerability, cutting it off before malicious actors could capitalize on the flaw. Big Sleep was developed through a collaboration between Google DeepMind and Google Project Zero. It had previously found a different SQLite vulnerability in late 2024, an earlier sign of its usefulness in proactive vulnerability discovery.

Alongside this announcement, Google published a white paper describing its approach to building secure AI agents. The paper advocates a hybrid defense-in-depth strategy that combines traditional, deterministic security controls with dynamic, reasoning-based defenses. The aim is to set firm boundaries around the agent’s operating environment, limiting the risk of harmful actions such as those triggered by prompt injection, while keeping the agent’s operations transparent and observable. The layered design reflects the view that neither rule-based controls nor AI-based judgment is sufficient on its own.

Reference: https://thehackernews.com/2025/07/google-ai-big-sleep-stops-exploitation.html

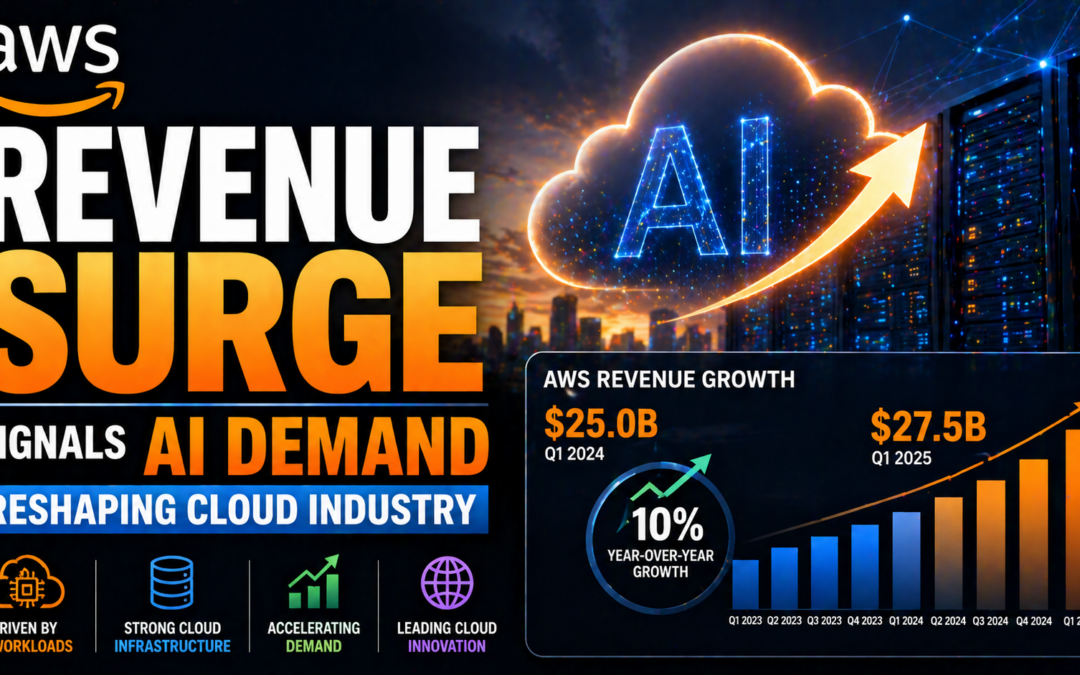

AWS Revenue Surge Signals AI Demand Reshaping Cloud Industry

Amazon’s latest earnings call highlights major momentum for Amazon Web Services (AWS), with cloud revenues climbing and capital expenditure escalating behind the scenes. The surge underscores the growing demand for generative AI workloads, fueling shifts in the...