Google Cloud reports revenue surpassing $20 billion but flags “capacity constraints” as a growth bottleneck, a combination with direct consequences for AI-driven businesses, developers, and enterprise adopters. Booming demand for large language models (LLMs) and generative AI is testing how quickly cloud infrastructure can scale, and Google’s services sit at the center of that test.

Key Takeaways

- Google Cloud revenue exceeded $20 billion, signaling robust enterprise adoption of AI and cloud services.

- Management cited “capacity constraints”—limited infrastructure resources—as a primary factor slowing growth.

- Explosive interest in LLMs and generative AI tools continues to strain hyperscaler platforms.

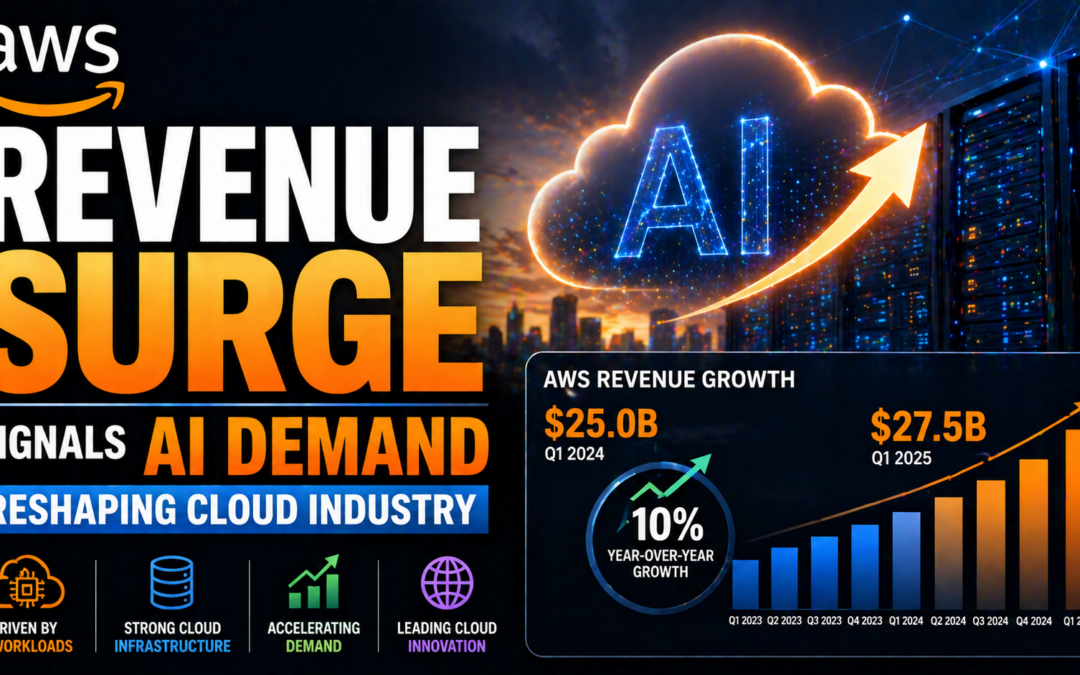

- Competitors like Microsoft, AWS, and Oracle are accelerating investment in GPU capacity to capture AI demand.

- Developers, startups, and AI professionals may see resource allocation delays and shifting partnership strategies.

Cloud Scalability: A Bottleneck for Generative AI

The surge in enterprise AI adoption has outpaced even the hyperscalers’ ability to provision infrastructure, with compute and GPU availability now shaping the ecosystem’s competitive landscape.

According to TechCrunch, and corroborated by CNBC and The Register, Google Cloud’s first quarter saw more than 28% year-over-year growth, with revenue reaching $20.3 billion. However, executives including Alphabet’s Ruth Porat and Sundar Pichai underscored that demand for AI workloads, especially LLM training and inference, has been so high that hardware limitations held back even faster growth.

Implications for Developers & Startups in the AI Era

These infrastructure bottlenecks have meaningful consequences. For developers, especially those integrating or building with AI APIs, limited cloud capacity may result in:

- Longer provisioning times for high-end GPU clusters

- Potential pricing increases as capacity becomes a premium commodity

- Greater competition for slotting large jobs or hosting complex LLMs

Startups and independent AI practitioners competing with major enterprise clients might face further delays or need to diversify across providers, including AWS, Azure, or newer entrants like Oracle Cloud, which is advertised as having excess Nvidia capacity.

Every hyperscaler now faces a dual mandate: expand physical infrastructure to match generative AI demand, and build software platforms that maximize every GPU hour.

Enterprise AI Adoption: A Race for Compute

Across the sector, demand for compute resources shows no sign of abating. Microsoft recently reported record Azure growth and made similar remarks about constrained GPU supply. AWS has accelerated its push into custom silicon with Trainium and Inferentia, while Oracle advertises “plentiful Nvidia H100s” to poach customers facing allocation limits elsewhere (CNBC, The Register).

This environment incentivizes resource-efficient AI models, hybrid/multi-cloud deployment architectures, and increased use of AI orchestration tools that can intelligently allocate jobs to available resources across clouds.
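
To make the orchestration idea concrete, here is a minimal sketch of a greedy allocator that places jobs on whichever provider has free GPUs, preferring the cheapest. Everything in it is an assumption for illustration: the provider names, capacities, prices, and the `allocate` function are hypothetical and do not correspond to any real cloud API or quota figure.

```python
from dataclasses import dataclass


@dataclass
class Provider:
    name: str                  # hypothetical label, not a real service
    free_gpus: int             # GPUs currently available (made-up figure)
    price_per_gpu_hour: float  # illustrative price, not a real quote


@dataclass
class Job:
    name: str
    gpus: int                  # GPUs the job needs on a single provider


def allocate(jobs, providers):
    """Greedy placement: largest jobs first, cheapest provider with room."""
    placement = {}
    # Track remaining capacity separately so the inputs are not mutated.
    free = {p.name: p.free_gpus for p in providers}
    by_price = sorted(providers, key=lambda p: p.price_per_gpu_hour)
    for job in sorted(jobs, key=lambda j: j.gpus, reverse=True):
        placement[job.name] = None  # None means the job stays queued
        for p in by_price:
            if free[p.name] >= job.gpus:
                free[p.name] -= job.gpus
                placement[job.name] = p.name
                break
    return placement


providers = [
    Provider("cloud_a", free_gpus=8, price_per_gpu_hour=2.0),
    Provider("cloud_b", free_gpus=16, price_per_gpu_hour=3.0),
]
jobs = [Job("finetune", 8), Job("pretrain", 16), Job("eval", 4)]
placement = allocate(jobs, providers)
print(placement)
```

In this toy run the 16-GPU job only fits on `cloud_b`, the 8-GPU job takes the cheaper `cloud_a`, and the smallest job is left queued because no capacity remains. A production orchestrator would also have to weigh data locality, egress costs, and preemption, none of which this sketch models.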

Strategic Recommendations for AI Builders

- Benchmark cloud providers on both performance and provisioning speed, especially for high-capacity jobs.

- Adopt multi-cloud or hybrid strategies to avoid single-provider bottlenecks and hedge against price hikes.

- Optimize code and model architectures for resource efficiency—smaller, distilled models may deliver better ROI during ongoing constraints.

- Explore scheduling, queuing, and orchestration solutions built for distributed, burstable AI workloads.
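
As a rough illustration of the first recommendation, the sketch below ranks providers by a weighted blend of provisioning wait and price. The `rank_providers` function, the provider names, and all numbers are hypothetical placeholders, not measurements; real benchmarks would come from your own provisioning logs and invoices, and the sketch assumes every measured value is positive.

```python
def rank_providers(measurements, time_weight=0.5):
    """Rank providers by normalized provisioning wait and cost.

    measurements maps a provider name to a tuple of
    (provisioning_minutes, usd_per_gpu_hour). Both values are assumed
    to be positive. A lower score is better.
    """
    max_wait = max(wait for wait, _ in measurements.values())
    max_cost = max(cost for _, cost in measurements.values())
    scores = {}
    for name, (wait, cost) in measurements.items():
        # Normalize each metric to (0, 1] so the weights are comparable.
        scores[name] = (time_weight * wait / max_wait
                        + (1 - time_weight) * cost / max_cost)
    return sorted(scores, key=scores.get)


# Made-up figures for three hypothetical providers.
measurements = {
    "cloud_a": (120.0, 2.0),  # slow to provision, cheapest
    "cloud_b": (30.0, 3.0),   # fastest to provision
    "cloud_c": (90.0, 4.0),   # most expensive
}
ranking = rank_providers(measurements)
speed_only = rank_providers(measurements, time_weight=1.0)
print(ranking, speed_only)
```

Raising `time_weight` toward 1.0 favors providers that deliver capacity quickly, which may matter more than list price while GPU supply is constrained.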

In the AI-native era, access to compute—not just algorithms—will decide who leads and who lags.

Conclusion

Google Cloud’s $20B+ milestone underscores the scale of enterprise AI investment. The key blocker is not a lack of customer interest but the challenge of expanding hardware and infrastructure fast enough to keep pace with the generative AI boom. Developers and startups working in AI must treat resource management and deployment strategy as central pillars of long-term competitiveness.

Source: TechCrunch