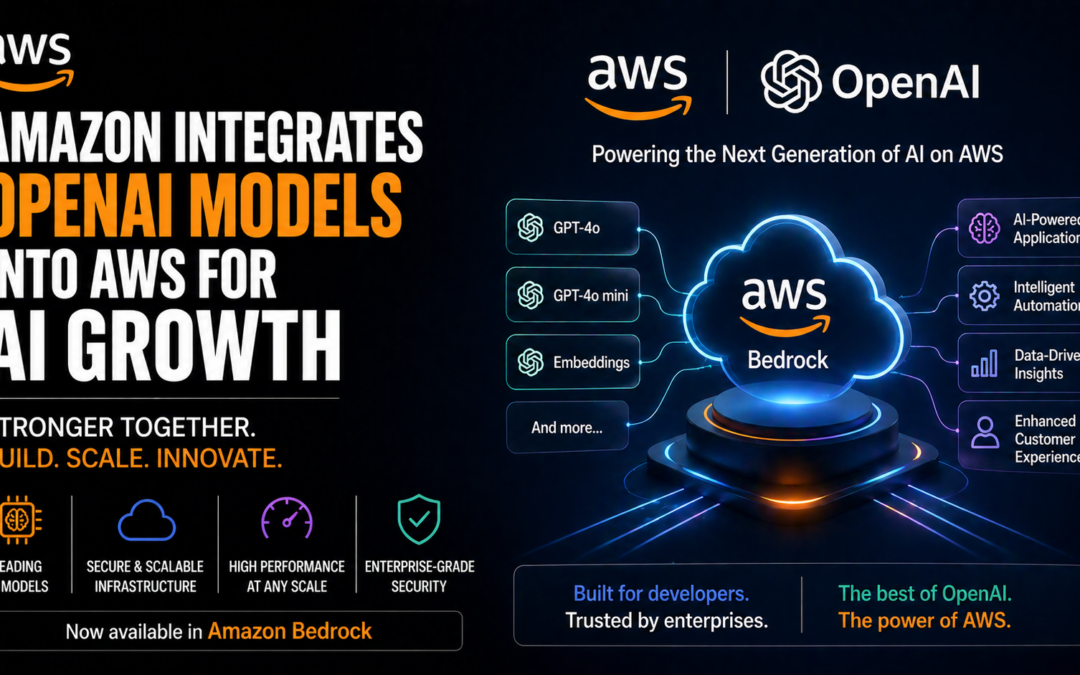

- Amazon rapidly integrates new OpenAI products into AWS, accelerating generative AI adoption.

- Enterprise AWS users can now access OpenAI models, including GPT-4o, as fully managed API endpoints.

- Amazon’s move strengthens its AI platform, positioning AWS as a leading marketplace for cutting-edge LLMs.

- Developers, startups, and AI-focused businesses gain streamlined access to the latest generative AI tools.

Artificial intelligence innovation is accelerating, and Amazon is amplifying the trend by rolling out the latest OpenAI models on AWS. This timely integration empowers developers and enterprises to quickly leverage advanced LLMs like GPT-4o and unlock new possibilities in application development, automation, and data intelligence.

Key Takeaways

- Immediate access to OpenAI’s advanced models via Amazon’s Bedrock platform equips businesses with the latest in generative AI.

- AWS strengthens its value as a turnkey AI infrastructure and marketplace for the world’s top language models.

- The partnership signals heightened competition among cloud providers to offer premium AI model access.

Amazon Accelerates AI Integration with OpenAI on AWS

According to multiple reports, including TechCrunch and The Verge, Amazon’s AWS is enabling customers to deploy the latest OpenAI generative AI models—including GPT-4o—directly from its Bedrock platform. Customers no longer need to depend on OpenAI’s own API channels; AWS abstracts complexity through fully managed endpoints built for scale, compliance, and enterprise security.

This gives developers immediate, global-scale access to state-of-the-art AI models without having to run the underlying infrastructure or build compliance controls from scratch.
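
To make the "fully managed endpoint" idea concrete, here is a minimal sketch of what a call could look like through the Bedrock Runtime Converse API in boto3. It rests on several assumptions: the model identifier is a hypothetical placeholder (the reports cited here do not give one), the region and inference settings are arbitrary, and the helper names `build_converse_request` and `ask` are invented for illustration.

```python
from typing import Any

# Hypothetical placeholder: the article does not name a Bedrock model identifier.
MODEL_ID = "example.openai-model-id"


def build_converse_request(
    prompt: str,
    model_id: str = MODEL_ID,
    max_tokens: int = 256,
    temperature: float = 0.2,
) -> dict[str, Any]:
    """Build keyword arguments for the Bedrock Runtime Converse API."""
    if not prompt.strip():
        raise ValueError("prompt must not be empty")
    return {
        "modelId": model_id,
        # Converse takes a list of messages; each message holds content blocks.
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens, "temperature": temperature},
    }


def ask(prompt: str, region: str = "us-east-1") -> str:
    """Send the prompt to Bedrock and return the first text block of the reply."""
    import boto3  # imported lazily so the request builder works without AWS set up

    client = boto3.client("bedrock-runtime", region_name=region)
    response = client.converse(**build_converse_request(prompt))
    return response["output"]["message"]["content"][0]["text"]
```

Calling `ask("Summarize this contract in three bullet points.")` would require boto3, valid AWS credentials, and access to the chosen model enabled in the account. Because the request shape does not depend on the vendor, switching models should in principle mean changing only `model_id`.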

Disruptive Implications for Developers and Startups

Direct access to GPT-4o through Amazon Bedrock allows AI professionals to build, iterate on, and deploy generative AI products faster. Startups in search, healthcare, and fintech benefit from streamlined prompt engineering and integration, while established enterprises reduce model operations overhead.

Industry coverage, including ZDNet’s, emphasizes that this access democratizes high-grade machine learning models and levels the playing field for smaller tech firms and independent AI architects.

“AWS customers can now tap into the world’s leading LLMs with enterprise-grade security, reliability, and scalability, fueling a new wave of generative AI applications.”

Competitive Landscape and Real-World Use Cases

As AWS adds OpenAI’s newest flagship models, competition intensifies with Microsoft Azure (OpenAI’s longtime partner) and Google Cloud, both of which also tout access to premium LLMs. However, Amazon’s Bedrock platform distinguishes itself by supporting a variety of foundation models from several providers (including Anthropic’s Claude, AI21, and Stability AI), giving users the flexibility to choose a model per workload.

For AI professionals, this lowers integration barriers, widens deployment options, and supports multicloud strategies. Applications already emerging include smarter chatbots, deeper insight extraction in document processing, legal tech automation, and personalized customer engagement at scale.

AWS’s move signals a new chapter for AI democratization, making high-performance generative models accessible to organizations of all sizes and technical skill sets.

Outlook: What’s Next for AI on AWS?

Developers and startups should expect a continued rapid rollout of emerging LLMs across cloud marketplaces. Amazon’s investment in Bedrock signals a deepening commitment to AI-first services, streamlined APIs, and supporting tools for prompt tuning, data privacy, and model customization. The race to deliver the best generative AI infrastructure is on, with AWS aggressively claiming a leadership position.

Source: TechCrunch