The rapid growth of generative AI, cloud computing, and large language models (LLMs) is causing data center energy consumption to surge at a dramatic rate.

According to new industry projections, global data center energy demand is on track to nearly triple by 2035, reaching close to 300% of today's level. That is a stark warning for developers, startups, and tech businesses building AI-powered solutions and infrastructure.

Key Takeaways

- Analysts forecast global data center energy usage will nearly triple between now and 2035, driven primarily by AI and LLM workloads.

- The power drawn by generative AI workloads now rivals the electricity requirements of small countries.

- This energy surge raises concerns about infrastructure, sustainability, and operational costs for AI-driven businesses.

- AI professionals, cloud providers, and innovators must prioritize energy efficiency, renewables, and responsible scaling.

- Governments and industry leaders are investing in greener technologies, but immediate action is needed to avoid bottlenecks and blackouts.

AI Boom Triggers Extraordinary Power Demands

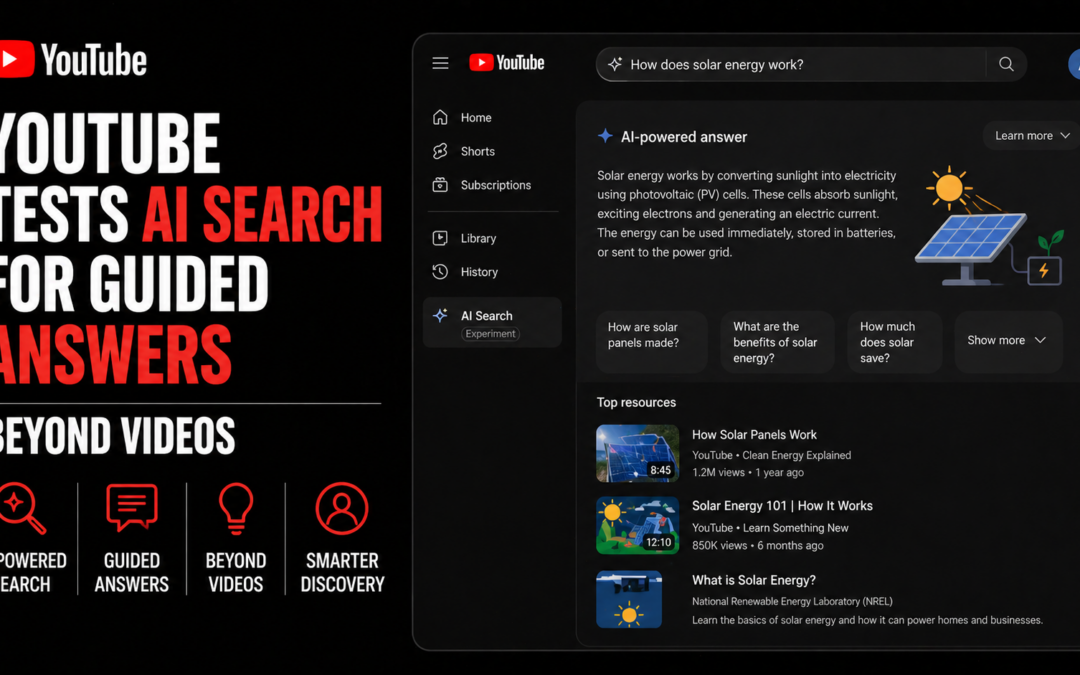

TechCrunch reports that the explosive adoption of generative AI models, especially the LLMs behind advances in conversation, search, and productivity tools, has placed unprecedented strain on the power grids that serve data centers worldwide.

Citing new research by the International Energy Agency and McKinsey, the report says the combined computational needs of training and running cutting-edge AI models could push total global data center consumption past 2,000 terawatt-hours per year by 2035.

For context, that is roughly double Japan's current annual electricity usage, which is on the order of 1,000 terawatt-hours.
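To put these projections on a more familiar scale, an annual energy total can be converted to an average continuous power draw, and a "nearly triple" forecast can be converted to an implied yearly growth rate. The sketch below is illustrative arithmetic only: the function names are made up for this example, and the 10-year horizon and exact 3x multiple are assumptions, not figures from the cited research.

```python
HOURS_PER_YEAR = 8760  # non-leap year


def average_power_gw(annual_twh: float) -> float:
    """Average continuous power (GW) implied by an annual energy total (TWh)."""
    # 1 TWh = 1,000 GWh, so dividing GWh by hours gives GW.
    return annual_twh * 1000 / HOURS_PER_YEAR


def implied_cagr(multiple: float, years: int) -> float:
    """Compound annual growth rate needed to grow by `multiple` over `years`."""
    return multiple ** (1 / years) - 1


# 2,000 TWh per year, the projection quoted above.
print(f"{average_power_gw(2000):.0f} GW")  # prints "228 GW"

# Assumption: demand triples over a 10-year horizon.
print(f"{implied_cagr(3.0, 10):.1%}")  # prints "11.6%"
```

Under these assumptions, tripling in a decade means demand growing by a bit under 12% every year, compounding.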

Generative AI is transforming innovation but also reshaping energy economics across the global tech stack.

What This Means for Developers and Startups

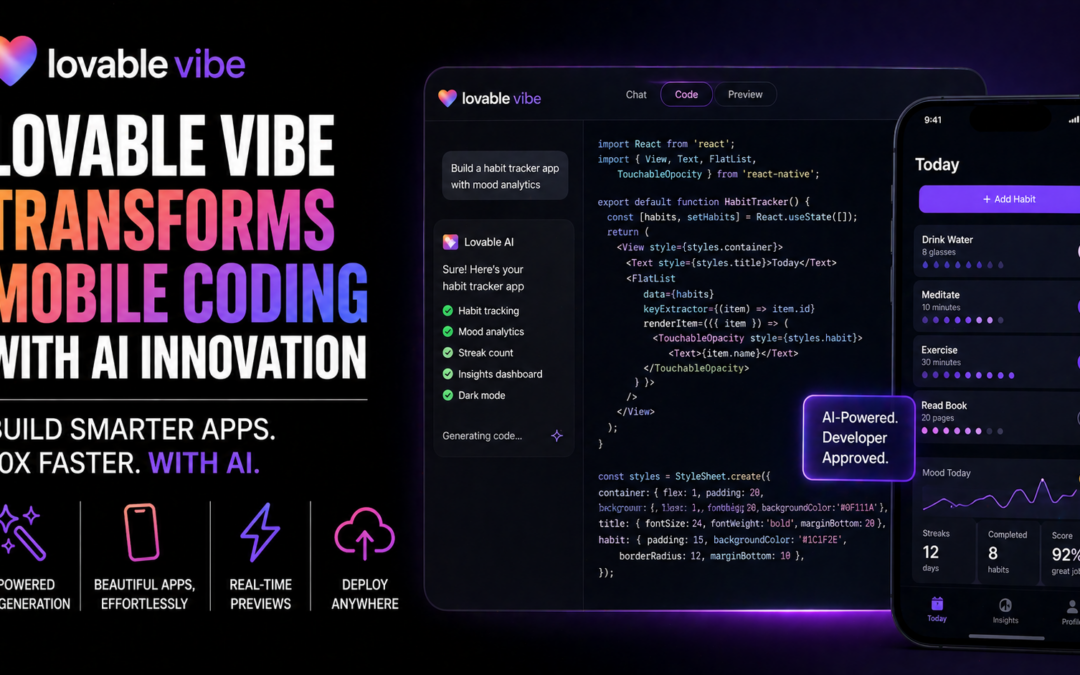

For developers and AI professionals, rising data center costs introduce new variables into product planning, model deployment, and infrastructure choices.

Teams optimizing LLM workflows for enterprise or consumer apps now see compute usage translate directly into both operating costs and environmental impact.
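One rough way to make that link concrete is a back-of-the-envelope estimate that turns GPU-hours into energy, cost, and emissions. The sketch below is a minimal illustration, not a billing or carbon-accounting tool. Every input value (per-GPU power draw, PUE, electricity price, grid carbon intensity) is a hypothetical placeholder that should be replaced with measured or provider-published numbers.

```python
from dataclasses import dataclass


@dataclass
class WorkloadEstimate:
    energy_kwh: float    # total facility energy, including overhead
    cost_usd: float      # electricity cost only
    emissions_kg: float  # CO2 emitted by the grid to supply that energy


def estimate_workload(
    gpu_hours: float,
    gpu_power_kw: float,
    pue: float,
    price_per_kwh: float,
    grid_kg_co2_per_kwh: float,
) -> WorkloadEstimate:
    """Estimate energy, electricity cost, and emissions for a compute job."""
    it_energy_kwh = gpu_hours * gpu_power_kw
    # PUE (power usage effectiveness) scales IT energy up to cover
    # cooling and other facility overhead.
    facility_energy_kwh = it_energy_kwh * pue
    return WorkloadEstimate(
        energy_kwh=facility_energy_kwh,
        cost_usd=facility_energy_kwh * price_per_kwh,
        emissions_kg=facility_energy_kwh * grid_kg_co2_per_kwh,
    )


# Hypothetical inputs: 1,000 GPU-hours at 0.7 kW per GPU, PUE of 1.2,
# $0.10 per kWh, and a grid intensity of 0.4 kg CO2 per kWh.
est = estimate_workload(1000, 0.7, 1.2, 0.10, 0.4)
print(f"{est.energy_kwh:.0f} kWh, ${est.cost_usd:.2f}, {est.emissions_kg:.0f} kg CO2")
# prints "840 kWh, $84.00, 336 kg CO2"
```

Because every term is multiplicative, halving GPU-hours through a smaller or better-optimized model halves all three outputs in this simple model.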

Key Considerations:

- Choosing the right cloud provider and region can affect reliability and sustainability.

- Tech stacks must increasingly prioritize energy-efficient models and chip architectures (such as ARM and AI-specific ASICs).

- Green cloud offerings and carbon-aware programming are gaining traction as mainstream selection criteria.
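As a minimal sketch of what carbon-aware region selection might look like, the example below picks the lowest-carbon region that still meets a latency budget. The region names, latencies, and carbon intensities are invented for illustration and do not describe any real cloud provider. A production system would pull these values from a live data source.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Region:
    name: str
    latency_ms: float           # round-trip latency to the target users
    grid_kg_co2_per_kwh: float  # carbon intensity of the local grid


def pick_region(candidates: list[Region], max_latency_ms: float) -> Region:
    """Return the lowest-carbon region that satisfies the latency budget."""
    eligible = [r for r in candidates if r.latency_ms <= max_latency_ms]
    if not eligible:
        raise ValueError("no region satisfies the latency budget")
    return min(eligible, key=lambda r: r.grid_kg_co2_per_kwh)


# Hypothetical regions with made-up numbers.
REGIONS = [
    Region("region-a", latency_ms=20, grid_kg_co2_per_kwh=0.45),
    Region("region-b", latency_ms=60, grid_kg_co2_per_kwh=0.12),
    Region("region-c", latency_ms=180, grid_kg_co2_per_kwh=0.03),
]

print(pick_region(REGIONS, max_latency_ms=100).name)  # prints "region-b"
```

Treating latency as a hard constraint and carbon intensity as the objective keeps the trade-off explicit: loosening the latency budget to 200 ms in this example would select region-c instead.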

Real-World Impact: Risk and Opportunity

Rapidly rising energy requirements expose new risks of disruption. Data center expansions often run into local grid bottlenecks, with regions as varied as Dublin and Northern Virginia pausing or reviewing data center projects because of limited power capacity or grid stress.

The scalability of future AI systems depends on finding innovative ways to decouple progress from energy consumption.

This challenge opens opportunities for startups working on efficient inference, improved cooling, renewable-powered hosting, and edge AI, all of which can help curb soaring energy requirements while supporting rapid innovation.

How the Industry Is Responding

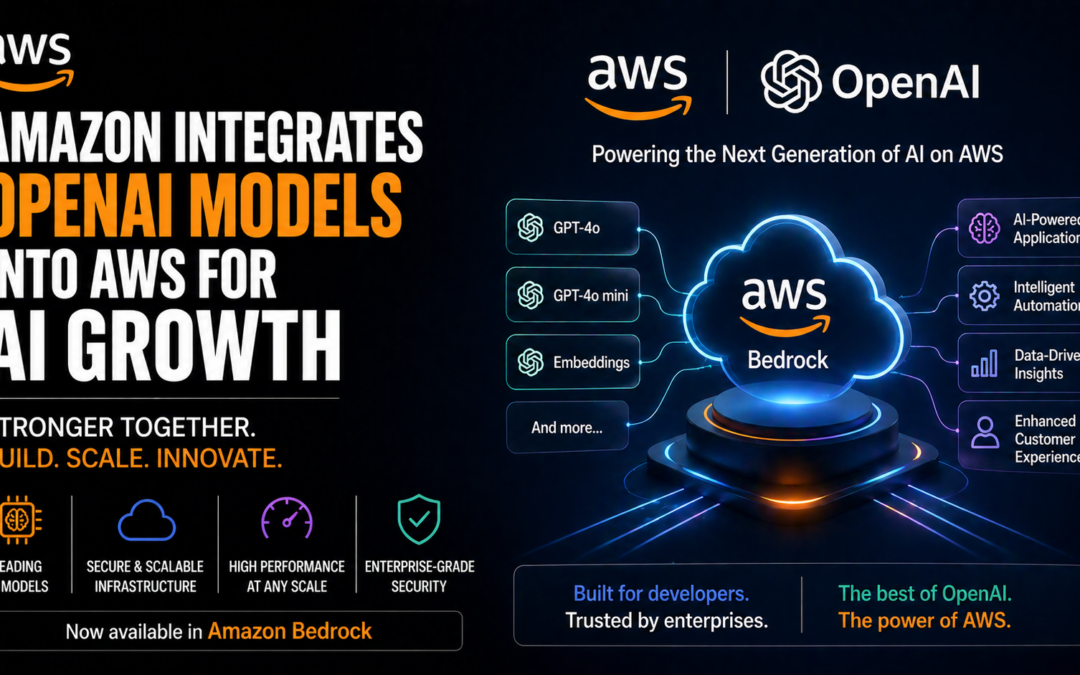

Leading hyperscalers (including Google, AWS, and Microsoft) are racing to build new data centers powered by wind, solar, and other renewable sources.

Simultaneously, governments are updating efficiency standards and investing in technologies like liquid cooling and AI-driven energy optimization.

According to Reuters, Microsoft recently unveiled plans for multibillion-dollar investments in European data centers, with a heavy emphasis on green energy sourcing.

Meanwhile, The Wall Street Journal highlighted that some U.S. utilities now prioritize data center grid upgrades on par with municipal infrastructure.

Outlook: Innovation Hinges on Responsible Scaling

The next decade will define whether the AI revolution can succeed sustainably. Developers and businesses that proactively address efficiency and resilience will set themselves apart as responsible leaders in a rapidly changing landscape.

AI’s benefits and risks now extend to the world’s energy future, making sustainable growth not just a best practice but a business imperative.

Source: TechCrunch