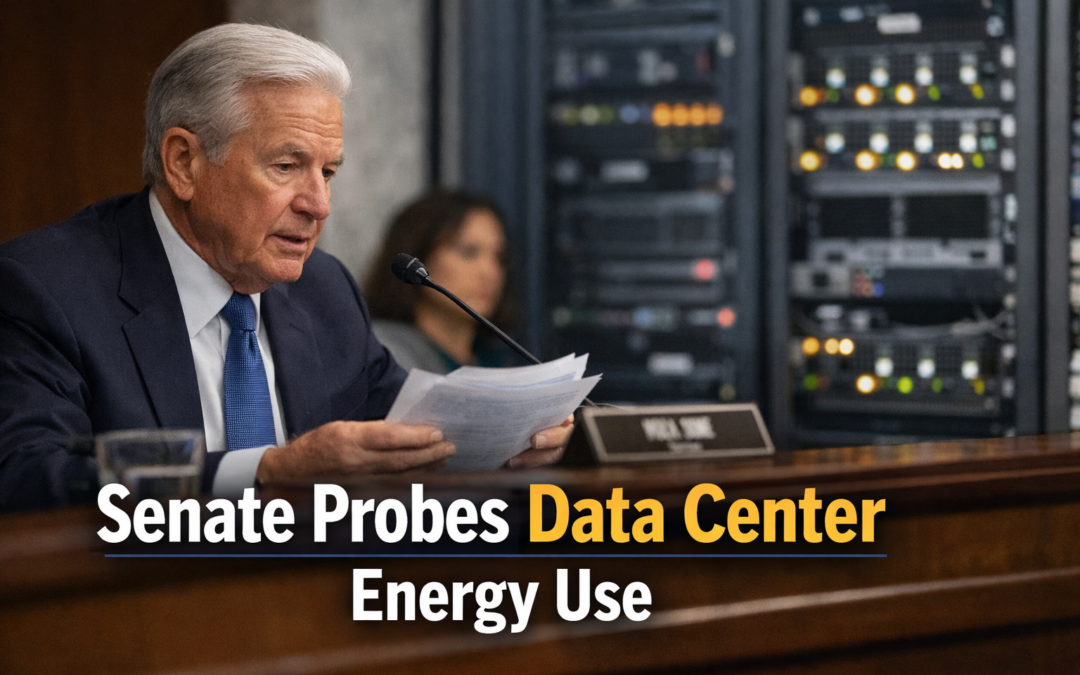

- U.S. Senate launches investigation into data center energy consumption and sustainability.

- Cloud giants and hyperscalers are being asked to publicly disclose power usage and related emissions.

- AI growth, especially large language models (LLMs), drives urgent scrutiny of data center impact.

- Developers and startups may face stricter compliance for cloud-powered AI applications.

- Legislative focus signals a major shift in global attitudes toward responsible generative AI deployment.

The U.S. Senate’s new oversight campaign zeros in on data center energy footprints, targeting cloud providers like Amazon Web Services, Google Cloud, and Microsoft Azure. As generative AI and LLMs consume ever more compute, lawmakers are demanding transparency and action. This Senate inquiry brings into sharp focus the growing tension between relentless AI innovation and real-world resource sustainability.

Key Takeaways

“As AI ambitions soar, regulatory scrutiny of data center sustainability becomes inevitable.”

- Senate committees, led by prominent policymakers, have officially requested disclosures from major data center operators on electricity usage, water consumption, and carbon mitigation strategies.

- Public concern centers on energy spikes fueled by rapid generative AI adoption, with LLMs like GPT-4 driving up power and cooling needs globally, according to reporting from The New York Times.

- Europe and Asia have trialed similar oversight, but U.S. policy may set a new benchmark for transparency and standards in the AI era.

Implications for AI and Cloud Stakeholders

“AI professionals must now build with not just performance, but power accountability in mind.”

The AI community faces direct impacts, especially those relying on cloud-hosted LLMs:

- Developers: API users and AI app builders may see higher operational costs or restrictions based on their computational footprint and emissions tied to their usage patterns.

- Startups: Early-stage ventures could gain an edge by adopting energy-efficient model architectures or sustainable deployment strategies, which are now a differentiator in funding rounds and enterprise procurement.

- AI Professionals: Expect demand for “green AI” expertise and optimized inference at scale to grow rapidly. Efficient model serving, serverless inference, and strategic model selection will matter more than ever, as highlighted in Forbes analysis.
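For teams that want a rough sense of the "computational footprint and emissions" mentioned above, a back-of-the-envelope estimate multiplies average hardware power draw by runtime, scales it by the facility's power usage effectiveness (PUE), and then applies the local grid's carbon intensity. The sketch below is only an illustration of that arithmetic: the function name `estimate_footprint`, the `WorkloadEstimate` type, and every input value are hypothetical and do not come from the Senate inquiry or any provider's reporting.

```python
from dataclasses import dataclass


@dataclass
class WorkloadEstimate:
    energy_kwh: float          # facility-level energy, including cooling overhead
    emissions_kg_co2e: float   # estimated emissions for that energy


def estimate_footprint(avg_power_watts: float,
                       hours: float,
                       pue: float,
                       grid_kg_co2e_per_kwh: float) -> WorkloadEstimate:
    """Rough estimate: IT energy x PUE x grid carbon intensity.

    All inputs are assumptions supplied by the caller; real figures
    would have to come from the cloud provider or from measurement.
    """
    # Energy drawn by the hardware itself, converted from Wh to kWh.
    it_energy_kwh = avg_power_watts * hours / 1000.0
    # Scale by PUE to account for cooling and other facility overhead.
    facility_energy_kwh = it_energy_kwh * pue
    # Convert energy to emissions using the grid's carbon intensity.
    emissions = facility_energy_kwh * grid_kg_co2e_per_kwh
    return WorkloadEstimate(facility_energy_kwh, emissions)


if __name__ == "__main__":
    # Hypothetical workload: 1 kW average draw for 10 hours,
    # a PUE of 1.5, and a grid intensity of 0.4 kg CO2e per kWh.
    estimate = estimate_footprint(1000.0, 10.0, 1.5, 0.4)
    print(f"{estimate.energy_kwh:.1f} kWh, "
          f"{estimate.emissions_kg_co2e:.1f} kg CO2e")
```

An estimate like this is coarse, since it ignores idle capacity, networking, and embodied emissions, but it can help compare deployment options (for example, a smaller model or a lower-carbon region) before formal reporting requirements arrive.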

Regulatory Trends and Future Prospects

The Senate’s investigation aligns with a global push for climate-responsive AI development. Several large data center operators have already experimented with 24/7 renewable energy tracking and water-saving cooling technologies. U.S. legislative action will likely accelerate best practices and force lagging providers to catch up or risk reputational and regulatory fallout. The inquiry parallels similar data center power crackdowns in the EU, as covered by The Financial Times.

“Public reporting on energy use may soon be as critical as benchmark testing for hyperscale AI players.”

Industry analysts anticipate that within the next 12 to 18 months, transparent sustainability metrics will be table stakes for hyperscale cloud platforms, both in North America and in global markets.

Conclusion

The new Senate inquiry into data center sustainability and AI energy use marks a transformative moment for generative AI and cloud infrastructure. Developers and tech organizations should prepare for rapidly evolving compliance, emphasizing not just scale and speed but long-term stewardship of resources.

Source: TechCrunch